Simply deploying your application in the cloud doesn’t make it cloud native. Cloud native applications are built from the ground up to leverage the strengths of cloud computing — such as its on-demand availability, continuous delivery, and scalability.

Cloud nativity is a core component of most modern software development, and it’s widely used in the Internet of Things (IoT). But there’s a lot to unpack, and if your organization is considering a cloud native approach to your application, you’ll want to be familiar with the principles, philosophies, and components cloud nativity entails.

In this guide, we’re going to cover:

- What the cloud actually is

- How cloud native applications work

- The benefits of cloud native applications

- How cloud native relates to the Internet of Things

For starters, what is the cloud?

What is the cloud?

“The cloud” is a term for servers, software applications, and databases you access over the internet. It’s an on-demand IT infrastructure. Since all of the actual computing and storage happens in this off-site network, users and developers can utilize the software from any device that can connect to the cloud.

While the cloud is often used synonymously with the internet, there actually isn’t just one cloud. There are numerous clouds. Some of them are public, some are private, and some are a combination of the two.

Public cloud

Cloud service providers like Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform enable businesses to build cloud-based applications without having to manage the infrastructure behind them. These are known as “public clouds,” which many companies and end users share as “tenants.”

In software development, using a public cloud can drastically reduce costs by removing the burden of maintaining (and securing) datacenters, investing in infrastructure, and employing IT professionals. This is the most common model for cloud computing.

Public clouds offer your business practically unlimited scalability and high availability. Providers have large, distributed networks of servers to reduce the impact of outages and failures, and their on-demand resources can grow with your business.

Note: With cloud service providers, you’ll sometimes see the terms “platform as a service” (PaaS) and “infrastructure as a service” (IaaS). These are two models providers use to rent out their computing resources to companies; in both cases, you’re paying for the service of using their infrastructure. The difference is that an IaaS provider supplies raw computing, storage, and networking resources and leaves the operating system and runtime for you to manage, while a PaaS provider also manages those layers and equips you with the frameworks and development tools you need to build applications fast.

Private cloud

Since a cloud is just a network of servers, databases, and software applications you access over the internet, businesses can create their own private clouds, too. Unlike a public cloud, a private cloud can be based on your company’s on-site infrastructure. (Third-party providers can also create private clouds for your organization.)

You still access your servers, databases, and software via the internet, but your organization has complete control over them (and if you own the hardware, you’re responsible for maintaining and securing it). Government organizations and large businesses with sensitive data often choose private clouds for greater privacy and control.

Hybrid cloud

Businesses that have already invested in IT infrastructure, or that have regulatory requirements to meet, sometimes use a “hybrid cloud”: a private cloud and a public cloud connected so that your apps and services can move between them.

This approach to cloud computing lets businesses deploy updates and services to either cloud and keep sensitive data on their private servers, while still scaling up computing and processing power in the public cloud as needed.

How do cloud native applications work?

Unlike software that you simply deploy in the cloud, cloud native applications (and the organizations that produce them) are built to leverage the advantages of cloud computing. Several key components set them apart. While they aren’t all “requirements” of cloud native applications, they’re development choices these applications tend to have in common.

Containers

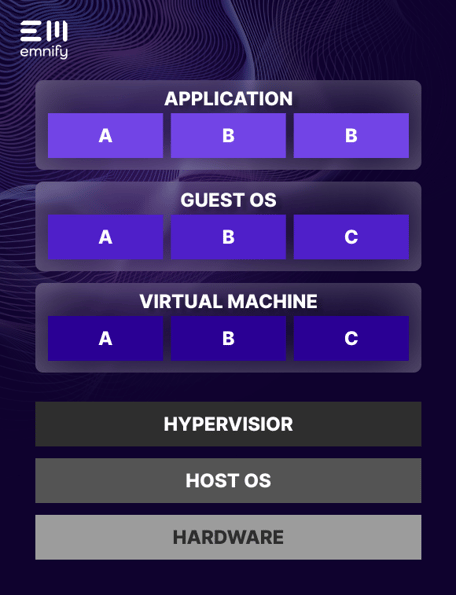

Most cloud native applications use a “container-based architecture” as opposed to a “virtual machine architecture.” Virtual machines divide up your infrastructure, while a container-based architecture divides up your application.

A virtual machine emulates a complete computer in software. Multiple virtual machines can run on a single physical machine, often on top of a “host” operating system, sharing the same underlying hardware, but each virtual machine requires its own “guest” operating system to run an instance of your application. A piece of software called a hypervisor sits between the virtual machines and the hardware and controls how each virtual machine uses the actual computing resources.

Confused? Here’s what it looks like:

It’s a lot more complex than it needs to be. While a diagram of this setup may look tidy, it’s pretty messy in practice. Because every virtual machine runs a complete guest operating system of its own, each one hogs (and competes for) a lot of resources.

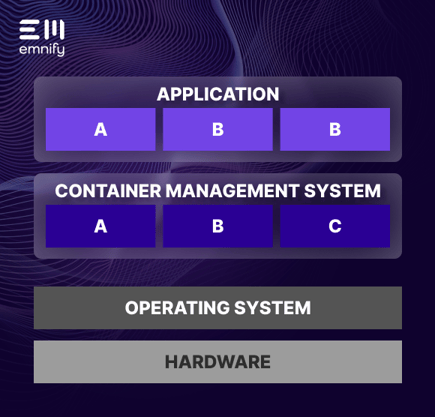

Containers break your application into individual components, each packaged with the libraries and settings it needs. A container management system (such as Kubernetes, available as a managed service through Google Kubernetes Engine, or Amazon Elastic Container Service) runs and schedules these containers, allowing them to communicate and share the underlying hardware resources efficiently.

Containers are extremely lightweight and resource-efficient. While every virtual machine requires a dedicated guest operating system, many containers can run side by side on a single OS, sharing the host’s kernel.

Here’s what it looks like:

In cloud computing, container-based architecture is one of the key concepts that make these apps so scalable, reliable, and easy to deploy. And if you’re using a public cloud (or a private cloud from a provider), it also makes your application more cost-effective. Since you’re paying for the infrastructure you use, using computing resources more efficiently lowers your infrastructure costs.

With every new deployment, you don’t need to ship a whole operating system along with your code; you just deploy the application.
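To make the efficiency argument concrete, here is a small Python sketch that counts how many identical hosts a fleet of application instances needs when each instance carries its own guest operating system (VM-style) versus a small per-container footprint. All memory figures are hypothetical round numbers chosen for illustration, not measurements of any real hypervisor or container runtime.

```python
def hosts_needed(instances: int, app_mb: int, per_instance_overhead_mb: int,
                 host_mb: int, host_os_mb: int) -> int:
    """Return how many identical hosts are needed to fit `instances` copies of an app.

    per_instance_overhead_mb: memory each copy needs on top of the app itself
        (a full guest OS for a VM, or a small runtime footprint for a container).
    host_os_mb: memory reserved once per host for the host operating system.
    """
    usable_mb = host_mb - host_os_mb
    per_instance_mb = app_mb + per_instance_overhead_mb
    per_host = usable_mb // per_instance_mb  # whole instances that fit on one host
    if per_host < 1:
        raise ValueError("a single instance does not fit on one host")
    return -(-instances // per_host)  # ceiling division


# Hypothetical numbers: 40 instances of a 512 MB service on 32 GB hosts,
# with 2 GB reserved for the host OS on each machine.
vm_hosts = hosts_needed(40, 512, per_instance_overhead_mb=1536, host_mb=32768, host_os_mb=2048)
container_hosts = hosts_needed(40, 512, per_instance_overhead_mb=64, host_mb=32768, host_os_mb=2048)
print(vm_hosts, container_hosts)  # 3 1
```

Because the guest OS overhead is paid once per instance, the VM-style fleet needs three times as many hosts in this made-up scenario; real ratios depend entirely on the workload.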

Microservices

Microservices go hand-in-hand with containers. Container-based architecture divides your application into smaller buckets, then manages their resource consumption with a container management system. Microservices break down your application into individual components (or services), and best practice is to run each microservice within a container. Instead of a single monolithic application, your software becomes numerous tiny pieces with specific jobs to do.

Microservices are loosely coupled and can operate independently from one another. They can be updated separately, and if one fails, the others can keep running.

Plus, when an individual microservice requires more computing resources, the container management system can allocate more resources to its container or start additional copies of it.

Since containers take up so little space, and the management system can allocate resources as needed, microservices improve the availability and agility of your service without turning your app into a power-hungry monstrosity.
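Whether one failing microservice disrupts the others depends partly on how callers handle failures. The Python sketch below simulates this in-process: a hypothetical dashboard gathers results from several independent services and degrades gracefully when one of them raises an error. In a real deployment the services would be separate containers reached over the network, with timeouts and retries; the service names here are made up.

```python
from typing import Callable, Dict


def gather(services: Dict[str, Callable[[], str]]) -> Dict[str, str]:
    """Call each service independently so one failure can't break the whole response."""
    results: Dict[str, str] = {}
    for name, call in services.items():
        try:
            results[name] = call()
        except Exception as exc:  # isolate the failure to this one service
            results[name] = f"unavailable: {exc}"
    return results


def temperature() -> str:
    return "4.2 C"


def location() -> str:
    raise ConnectionError("location service is down")


def battery() -> str:
    return "87%"


print(gather({"temperature": temperature, "location": location, "battery": battery}))
# {'temperature': '4.2 C', 'location': 'unavailable: location service is down', 'battery': '87%'}
```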

Continuous delivery

Continuous delivery is the practice of automatically building, testing, and deploying every code change to a testing or staging environment, so that the software is always ready to be released to production. With cloud native applications, it’s easy to test updates in multiple environments without making them live, so developers can validate changes and correct potential problems before they reach customers.
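A minimal Python sketch of that flow, assuming a hypothetical pipeline with automated checks: every change is deployed to a staging environment and tested automatically, and only changes that pass become candidates for production. The final release is a separate decision, which is what distinguishes continuous delivery from continuous deployment (where passing changes go live automatically). The check function and version strings are placeholders.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Pipeline:
    checks: List[Callable[[str], bool]]          # automated tests run against staging
    staging: List[str] = field(default_factory=list)
    production: List[str] = field(default_factory=list)

    def deliver(self, change: str, release: bool = False) -> str:
        self.staging.append(change)              # every change is deployed to staging
        if not all(check(change) for check in self.checks):
            return "rejected"                    # caught before customers ever see it
        if not release:
            return "releasable"                  # validated, awaiting a release decision
        self.production.append(change)
        return "released"


pipeline = Pipeline(checks=[lambda change: "bug" not in change])
print(pipeline.deliver("v1.1"))                  # releasable
print(pipeline.deliver("v1.2-bug"))              # rejected
print(pipeline.deliver("v1.3", release=True))    # released
print(pipeline.production)                       # ['v1.3']
```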

DevOps

To fully harness the potential of cloud computing, you don’t just need to rethink the way you design your software—you need to rethink the way you organize your company and the philosophies that drive your teams.

Simply put, DevOps combines the traditionally siloed roles of development and operations.

In some cases, organizations literally combine these teams, or have software engineers dividing their responsibilities between these two areas. And they invest in tools that help speed up operations and free developers to work independently. But whatever it looks like, the aim is that employees think about and feel invested in the entire lifecycle of your application, from development to deployment.

So what does that actually look like? Typically a DevOps mindset means implementing small, frequent updates, which lets you react faster to your customers’ needs and resolve bugs quicker—because it’s easier to trace the bug back to a specific change.

Using microservice architecture helps with this, too. Since this model separates your services, it makes it easier to update individual ones without disrupting other vital functions.

Continuous delivery is also a core component of DevOps, allowing teams to thoroughly explore the implications of every change before pushing it live.
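Small, frequent updates make bugs easier to trace because the history of changes can be searched efficiently. The Python sketch below performs a binary search over an ordered list of changes, the same idea behind `git bisect`. It assumes a hypothetical `is_broken(i)` test that reports whether the system fails with changes 0 through i applied, and that once a bad change lands, every later version is also broken. With n changes it needs about log2(n) test runs, and the smaller each change is, the less code there is to inspect once the culprit is found.

```python
from typing import Callable, Sequence


def first_bad_change(changes: Sequence[str], is_broken: Callable[[int], bool]) -> str:
    """Binary-search for the first change after which the system is broken."""
    if not changes or not is_broken(len(changes) - 1):
        raise ValueError("need a non-empty history whose latest version is broken")
    lo, hi = 0, len(changes) - 1
    while lo < hi:
        mid = (lo + hi) // 2
        if is_broken(mid):
            hi = mid          # the culprit is at mid or earlier
        else:
            lo = mid + 1      # the culprit comes after mid
    return changes[lo]


history = ["add-sensor", "fix-typo", "new-alert-rule", "refactor-parser", "bump-timeout"]
print(first_bad_change(history, lambda i: i >= 3))  # refactor-parser
```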

The benefits of cloud native applications

A cloud native approach to software development gives your organization several huge advantages over traditional development models—namely, scalability, agility, and resilience. It also makes it easier to deploy your application globally.

Scalability

Arguably, the biggest strength of cloud computing is its scalability. Utilizing a cloud-based architecture, companies can scale processing power up and down as needed and upgrade individual components.
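As a concrete example of scaling up and down as needed, the Kubernetes Horizontal Pod Autoscaler documents a proportional rule: `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`. The Python sketch below applies that rule with a tolerance band that skips changes for small deviations and with minimum and maximum replica bounds. The specific bounds and the 0.1 tolerance are assumptions for illustration, not a description of any particular cluster’s configuration.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Proportional scaling rule: grow or shrink the replica count by the load ratio."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to the target; avoid flapping
    else:
        desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# Hypothetical service targeting 60% average CPU utilization.
print(desired_replicas(4, current_metric=90, target_metric=60))   # 6  (scale up)
print(desired_replicas(4, current_metric=63, target_metric=60))   # 4  (within tolerance)
print(desired_replicas(4, current_metric=15, target_metric=60))   # 1  (scale down)
print(desired_replicas(8, current_metric=300, target_metric=60))  # 10 (capped at max)
```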

If your application runs in a public cloud, you don’t even need to invest in new hardware and infrastructure to accommodate greater usage or computing requirements. You can pursue startup projects without massive upfront costs, and you can easily scale down if things don’t go as you’d hoped.

Public clouds have their limits. But providers like Amazon Web Services, Microsoft Azure, and Google Cloud have built massive IT infrastructures, and your application likely won’t even come close to their limits. You simply pay for however much of their infrastructure you need to use.

Suppose you create a consumer IoT device. It takes off in popularity and attracts far more users than you anticipated. With a cloud-native approach, you can easily scale up your infrastructure and avoid having overloaded servers impact your user experience. You can supplement your company’s private cloud with a public one, or simply use a public cloud.

On the other hand, if you didn't take a cloud-native approach, an unexpected surge of usage or flood of new users could easily max out your infrastructure. Instead of building out your business and immediately capitalizing on the success, you're forced to try to rapidly find and deploy physical servers and infrastructure to accommodate the demand. And if that demand fades, you're left with far more owned infrastructure than you need.

Agility

When you have devices spread across a wide geographic area and performing critical functions, you can’t just shut everything down for an update, and you don’t want to release changes into the wild without being confident that they won’t cause disruptions.

Cloud native applications utilize small, rapid-fire updates to quickly respond to customer feedback and adapt to evolving needs. Using multiple virtual environments, you can fully explore how changes will impact your software without creating issues for your end users. And thanks to microservices, you can deploy updates to one service without impacting another—or even experiencing downtime for the individual service.

For example, suppose you’re developing a cold chain monitoring solution, and your largest customer requests new functionality, or shares a bug that’s been causing them problems. Or maybe a potential customer leads you to discover that with the addition of a particular feature, your application would be ideal for a type of business you hadn’t considered before. A cloud native approach makes it easy to pivot and prioritize these small changes, then test and thoroughly validate your solutions before rolling them out to customers.
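Updating a service without downtime usually relies on a rolling update. The Python sketch below models the surge-style variant, similar in spirit to a Kubernetes Deployment using the `RollingUpdate` strategy with `maxSurge` set to 1 and `maxUnavailable` set to 0: start one new instance, then retire one old one, until the whole fleet runs the new version. It simulates the bookkeeping only; the version labels are hypothetical, and real orchestrators also wait for health checks before retiring old instances.

```python
from typing import List, Tuple


def rolling_update(fleet: List[str], new_version: str) -> Tuple[List[str], List[int]]:
    """Surge-style rolling update: add one new instance, then remove one old one.

    Returns the final fleet and the number of running instances after each step,
    so you can confirm capacity never dips below the original fleet size.
    """
    fleet = list(fleet)
    running_counts: List[int] = []
    while any(version != new_version for version in fleet):
        fleet.append(new_version)                    # 1. bring up a new instance
        running_counts.append(len(fleet))
        old = next(i for i, v in enumerate(fleet) if v != new_version)
        fleet.pop(old)                               # 2. retire one old instance
        running_counts.append(len(fleet))
    return fleet, running_counts


final, counts = rolling_update(["v1", "v1", "v1"], "v2")
print(final)        # ['v2', 'v2', 'v2']
print(min(counts))  # 3, never fewer instances than before the update
```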

Resilience

Cloud native applications are designed to keep running when parts of the system fail. Redundancy is a core principle of cloud native design, and it enables your software to remain operational even when critical systems and infrastructure fail.

System failures are inevitable in production software. With a non-cloud native approach, a single natural disaster can temporarily disable or even permanently destroy your infrastructure. But if a storm knocks out power to one of a cloud service provider’s data centers, applications deployed across multiple locations can rely on the provider’s other data centers to pick up the slack and maintain connectivity while service is restored locally. With that redundancy in place, there’s always a backup, so failures have minimal impact on your end users.

Built-in software redundancy means multiple instances of each microservice can take over if one instance goes down. Cloud service providers also let you build infrastructure redundancy by running copies of your service in multiple availability zones, so if a power outage disables a crucial data center, your service is already using a backup that was running in parallel. And if you use multi-network cellular connectivity, your devices can even switch to another network when one becomes unavailable or has a poor connection.
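The availability-zone redundancy described above can be sketched as a load balancer that only sends traffic to healthy zones. In this Python simulation (zone names and health flags are hypothetical), requests are spread round-robin across every healthy zone, so when one zone goes dark, its share of traffic moves to copies that were already running elsewhere.

```python
from itertools import cycle
from typing import Dict


def route(requests: int, zone_health: Dict[str, bool]) -> Dict[str, int]:
    """Spread requests round-robin across healthy zones and count where each one lands."""
    healthy = [zone for zone, ok in zone_health.items() if ok]
    if not healthy:
        raise RuntimeError("no healthy availability zone")
    counts = {zone: 0 for zone in healthy}
    for _, zone in zip(range(requests), cycle(healthy)):
        counts[zone] += 1
    return counts


print(route(6, {"zone-a": True, "zone-b": True, "zone-c": True}))
# {'zone-a': 2, 'zone-b': 2, 'zone-c': 2}
print(route(6, {"zone-a": False, "zone-b": True, "zone-c": True}))
# {'zone-b': 3, 'zone-c': 3}
```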

How cloud native relates to the Internet of Things

Most Internet of Things (IoT) applications live in the cloud. Cloud service providers make it easy for developers to focus on their software without worrying about building or investing in infrastructure. And when you deploy your devices over a large geographic area or around the globe, there’s already infrastructure in place to support them.

A cloud-based approach is the ideal way to handle data transfers and roll out updates, and because data lives in the cloud, it also reduces the need for built-in storage on the device.

Cloud native development is the best pathway to building scalable, cost-efficient, reliable IoT solutions. But there’s one aspect of cloud nativity that manufacturers often overlook: connectivity. And that’s where emnify comes in.

Cloud native connectivity

Cloud native connectivity applies the principles of cloud computing to connectivity, using features like microservices, multi-layer redundancy, and virtualization to provide reliable connections to the cloud.

Instead of relying on a single network or carrier, emnify’s cloud native connectivity makes your devices “network agnostic,” giving them the ability to connect to whichever carrier has the strongest signal or lowest costs. With cloud native development, you can let a provider manage the hardware or the operating system, freeing you to focus on your application. With cloud native connectivity, you don’t need to juggle contracts or worry about network selection. We’ll ensure your devices have coverage wherever you deploy.
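To illustrate what network agnostic selection means, here is a Python sketch of a device choosing among several carriers by signal strength or by cost. It is not emnify’s selection logic; the carrier names, dBm readings, per-MB prices, and the -110 dBm usability threshold are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Network:
    name: str
    signal_dbm: float   # received signal strength; values closer to 0 are stronger
    cost_per_mb: float  # hypothetical price


def pick_network(networks: List[Network], prefer: str = "signal",
                 min_signal_dbm: float = -110.0) -> Optional[Network]:
    """Choose the strongest usable network, or the cheapest usable one if prefer="cost"."""
    usable = [n for n in networks if n.signal_dbm >= min_signal_dbm]
    if not usable:
        return None  # no carrier is usable right now
    if prefer == "cost":
        # cheapest first; break ties with the stronger signal
        return min(usable, key=lambda n: (n.cost_per_mb, -n.signal_dbm))
    return max(usable, key=lambda n: n.signal_dbm)


carriers = [
    Network("carrier-a", signal_dbm=-95, cost_per_mb=0.02),
    Network("carrier-b", signal_dbm=-80, cost_per_mb=0.05),
    Network("carrier-c", signal_dbm=-115, cost_per_mb=0.01),  # too weak to use
]
print(pick_network(carriers).name)                 # carrier-b
print(pick_network(carriers, prefer="cost").name)  # carrier-a
```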

Cloud native services easily integrate with your tech stack. Our API integrates our connectivity capabilities with your operations workflows. Using emnify Data Streamer, you can get visibility into network events, service usage, and costs directly in your other applications.
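Streaming usage data into your own applications typically ends with a step like the one below: parsing event records and rolling them up per device. This Python sketch assumes a hypothetical newline-delimited JSON format with `device_id`, `volume_mb`, and `cost` fields; it does not reflect the actual schema of emnify Data Streamer or any other product, so the field names would need to match whatever your integration really delivers.

```python
import json
from collections import defaultdict
from typing import Dict, Iterable


def summarize_usage(lines: Iterable[str]) -> Dict[str, Dict[str, float]]:
    """Total data volume and cost per device from newline-delimited JSON records."""
    totals: Dict[str, Dict[str, float]] = defaultdict(lambda: {"volume_mb": 0.0, "cost": 0.0})
    for line in lines:
        if not line.strip():
            continue  # skip blank lines
        record = json.loads(line)
        device = totals[record["device_id"]]
        device["volume_mb"] += record["volume_mb"]
        device["cost"] += record["cost"]
    return dict(totals)


events = [
    '{"device_id": "tracker-1", "volume_mb": 1.5, "cost": 0.25}',
    '{"device_id": "tracker-2", "volume_mb": 0.5, "cost": 0.125}',
    '{"device_id": "tracker-1", "volume_mb": 2.5, "cost": 0.5}',
]
print(summarize_usage(events))
# {'tracker-1': {'volume_mb': 4.0, 'cost': 0.75}, 'tracker-2': {'volume_mb': 0.5, 'cost': 0.125}}
```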

Cloud service providers let you start and stop paying for service at any time. The same is true with cloud native connectivity providers like emnify. You only pay for the data you use.

As a cloud native connectivity provider, emnify’s managed IoT connectivity platform is deployed in multiple public cloud regions. So when you deploy globally, your data doesn’t have to travel across the world before it gets to your application. You can deploy your solution in multiple regions to keep data close to your customers and reduce latency. That’s not possible with a traditional operator, where data has to be routed through the provider’s home network before it reaches your application.
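Keeping data local comes down to sending each device’s traffic to the nearest region where your solution is deployed. The Python sketch below picks, for every device, the deployed region with the lowest measured round-trip latency. The region names and latency figures are hypothetical placeholders, not real cloud regions or measurements.

```python
from typing import Dict, Set


def assign_regions(latency_ms: Dict[str, Dict[str, float]], deployed: Set[str]) -> Dict[str, str]:
    """Map each device to the deployed region with the lowest measured latency."""
    assignment: Dict[str, str] = {}
    for device, by_region in latency_ms.items():
        candidates = {region: ms for region, ms in by_region.items() if region in deployed}
        if not candidates:
            raise ValueError(f"no deployed region reachable from {device}")
        assignment[device] = min(candidates, key=candidates.__getitem__)
    return assignment


measurements = {
    "device-berlin": {"region-eu": 18.0, "region-us": 95.0, "region-asia": 210.0},
    "device-tokyo": {"region-eu": 230.0, "region-us": 140.0, "region-asia": 35.0},
}
print(assign_regions(measurements, deployed={"region-eu", "region-asia"}))
# {'device-berlin': 'region-eu', 'device-tokyo': 'region-asia'}
print(assign_regions(measurements, deployed={"region-eu"}))
# {'device-berlin': 'region-eu', 'device-tokyo': 'region-eu'}
```

Deploying in more regions gives the selection more nearby options, which is where the latency improvement comes from.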

Much like how cloud service providers free you from the challenges of infrastructure, emnify frees you from the challenges of connectivity. Whether you’re deploying on a local or global scale, we deliver complete end-to-end cloud connectivity. With emnify, you can apply your cloud-native philosophy to connectivity.

Let’s talk about cloud native connectivity

Connectivity is a vital part of the Internet of Things. If you want to be a successful manufacturer of IoT devices, you need to have a plan for connectivity from the start. At emnify, our cellular IoT experts are happy to discuss the connectivity options available and help you pick the right solution for your application.

Get in touch with our IoT experts

Discover how emnify can help you grow your business and talk to one of our IoT consultants today!

If you want to understand how emnify customers are using the platform, Christian has the insights. With a clear vision to build the most reliable and secure cellular network that IoT businesses can control, Christian leads the emnify product network team.